The Data Curation Network: discussing and providing solutions for data issues in a collaborative way

13/06/2016

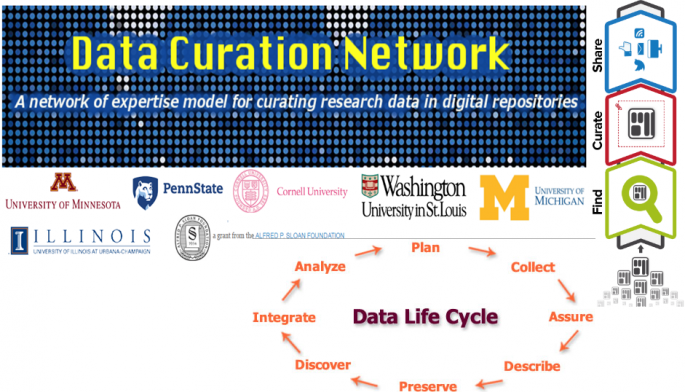

The Data Curation Network project will enable academic institutions to better support researchers who face a growing number of requirements to share their research data ethically.

Why is shared data curation needed?

“In order for data to be fully publicly accessible to search, retrieve, and analyze, specialized curatorial actions must be taken to best prepare these data for reuse including quality assurance, file integrity checks, documentation review, metadata creation for discoverability, and file transformations into archival formats … Due to the heterogeneous and multidisciplinary nature of research data generated in our nation's academic institutions, the skills and expertise required to curate data (to prepare, arrange, describe, and test data for optimal reuse) cannot reasonably be provided by a few experts siloed at single institutions” (Data Curation Network).

Data Curation Network project

To improve researcher support, the University of Minnesota Libraries will lead efforts (under a grant from the Alfred P. Sloan Foundation) to develop a network for sharing data curation resources and staff across six major academic libraries, whose staff provides data curation services, including preparing digital research data for open access use and reuse.

In particular, the one-year (2016-2017) Data Curation Network project will develop a “network of expertise” model that enables multiple institutions to deliver a unique service by sharing expert staff, resources, advice and practical help, so that academic libraries can manage research data effectively at any stage of the data lifecycle. By defining a shared approach to specialized information needs, the model will extend data curation services beyond what any single institution could offer alone.

The project team is charged with:

- determining how to effectively implement, assess, and sustain a shared model for providing data curation services;

- actively monitoring the metrics involved with curating data (duration, volume, skill sets utilized, and data type frequency);

- presenting findings on budgeting, staffing models, and workflows for data curation relevant to academic libraries;

- taking into account existing data curation workflows and best practices (e.g., Research Data Alliance (RDA) Publishing Data Workflows, Stewardship Gap project, DataQ Project), shared staffing models, and the skill sets needed by data curators;

- seeking input from researchers to better understand how data curation services fit into their research workflow and data management needs,

in order to better support researchers in all fields faced with a growing number of requirements to share their research data openly and ethically.

Afterwards, the project team will seek funding to implement a model Data Curation Network as a fixed-term pilot enabling informed and sustainable services across six participating institutions. The model for the Data Curation Network will include the following:

- An implementation plan addressing the procedures necessary to handle the curation workload for a wide variety of data types and formats, as well as the challenges of managing a geographically and institutionally distributed staff;

- Baseline measures of current demand for data curation services to forecast the potential workload and effort involved with providing data curation services;

- An assessment plan defining ways to assess the cost-effectiveness, efficiency, and demand (both in data variety and skills utilized) for a Data Curation Network;

- A sustainability plan recommending potential levels of support needed to sustain the Data Curation Network post-implementation and provide the necessary incentives to grow the network beyond initial partners.

Other successful projects and communities also promote collaborative models for exchanging expertise and staff in data publishing, management and curation, and for developing common services. Here are some examples:

- the 2CUL project, which supports joint collection development and cataloging services at Columbia University and Cornell University;

- the Collaboration to Clarify the Costs of Curation (4C) project, which works to make the costs of curation better understood;

- EUDAT, the collaborative pan-European data infrastructure providing research data services, training and consultancy for researchers, research communities, research infrastructures and data centers;

- the World Wide Web Consortium (W3C), an international community where member organizations and the public work together to develop Web standards (e.g. Data on the Web Best Practices) for building collaborative infrastructures;

- the Research Data Alliance (RDA), formed of experts from around the world, which builds the social and technical bridges that enable open sharing of data;

- the Global Open Data for Agriculture and Nutrition (GODAN) initiative, which promotes collaboration to harness the growing volume of data generated by new technologies to solve long-standing problems and to benefit farmers and the health of consumers;

- the Digital Preservation Network, consisting of geographically redundant nodes such as the Academic Preservation Trust;

- DuraSpace repository software, such as Fedora and DSpace, whose code base and service models have been developed by a global community.

Do you know of other collaborative initiatives that support the publication, management and curation of research data, or shared service models for this work? Share your knowledge and experiences here on the AIMS site!

Source:

Launching the Data Curation Network

See also:

PORTAGE: Shared Stewardship of Research Data

Highlights from Data Curation & the EUDAT Collaborative Data Infrastructure workshop at the IDCC16 conference

The FAIR Guiding Principles for scientific data management and stewardship

re3data.org: a global registry of research data repositories

Research Data Curation Bibliography

Show me the money – the path to a sustainable Research Data Facility